After lots of starts and stops on this project, I’ve finally arrived at a design that feels workable. It consists of 3 major layers:

Authoring Layer

I wanted the authoring process to be friendly and “low resolution” so you don’t need to be a geologist, biologist, or artist to create a world where interesting things can happen.

The authoring layer presents a simple framework for building the environment.

Designing World Objects

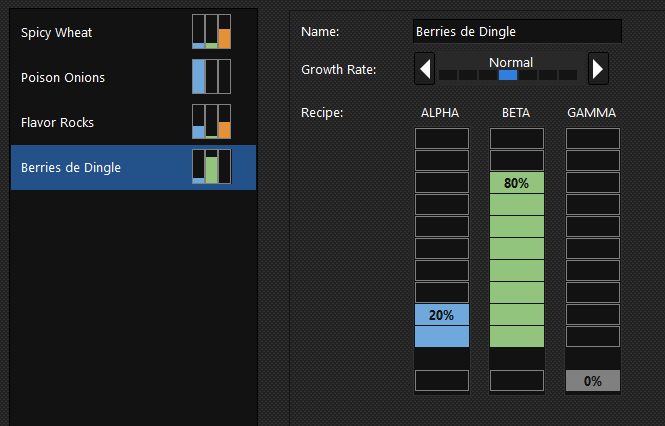

Molecules - A relaxed idea of the building blocks of all natural resources. Currently there are 4 in the system. Only 3 occur naturally in the environment: Alpha, Beta, and Gamma. The 4th, Biomass, doesn’t participate in authoring.

Foods - Foods make up the naturally occurring resources in the world. Each food is a distinct combination of molecules, which creates opportunities for agents to have differing absorption spectra, allowing for food pressures.
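To make the molecule/food relationship concrete, here’s a rough sketch of how I picture the data. Everything in it is an assumption for illustration: the `Molecule` enum, the `Food` class, the `composition` dictionary of relative amounts, and the `fraction` helper are hypothetical names, not the editor’s real format.

```python
from dataclasses import dataclass
from enum import Enum


class Molecule(Enum):
    ALPHA = "alpha"
    BETA = "beta"
    GAMMA = "gamma"
    BIOMASS = "biomass"  # exists in the system but is not used in authoring


# Only these three occur naturally, so only these may appear in an authored food.
NATURAL_MOLECULES = frozenset({Molecule.ALPHA, Molecule.BETA, Molecule.GAMMA})


@dataclass
class Food:
    name: str
    # Hypothetical representation: molecule -> relative amount (any positive number).
    composition: dict

    def __post_init__(self):
        if not self.composition:
            raise ValueError("a food needs at least one molecule")
        for molecule, amount in self.composition.items():
            if molecule not in NATURAL_MOLECULES:
                raise ValueError(f"{molecule.name} cannot be used when authoring a food")
            if amount <= 0:
                raise ValueError("amounts must be positive")

    def fraction(self, molecule):
        """Share of this food made up of the given molecule (0.0 if absent)."""
        total = sum(self.composition.values())
        return self.composition.get(molecule, 0) / total
```

With something like this, `Food("berry", {Molecule.ALPHA: 3, Molecule.BETA: 1})` is three parts Alpha to one part Beta, and an agent that absorbs Alpha well would get more out of it than one tuned to Gamma.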

Here’s an early version of the food designer:

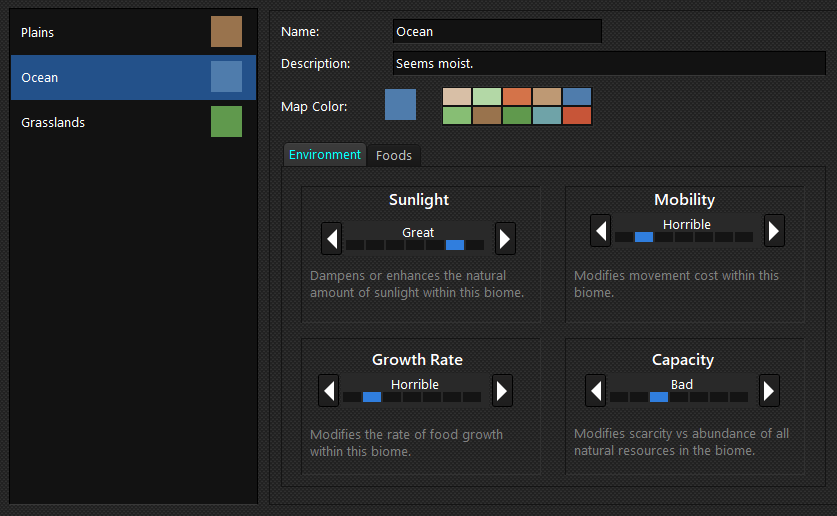

Biomes - A biome is a set of parameters that can be applied to any location within a region to control various environmental aspects.

Here’s an early implementation of the biome designer:

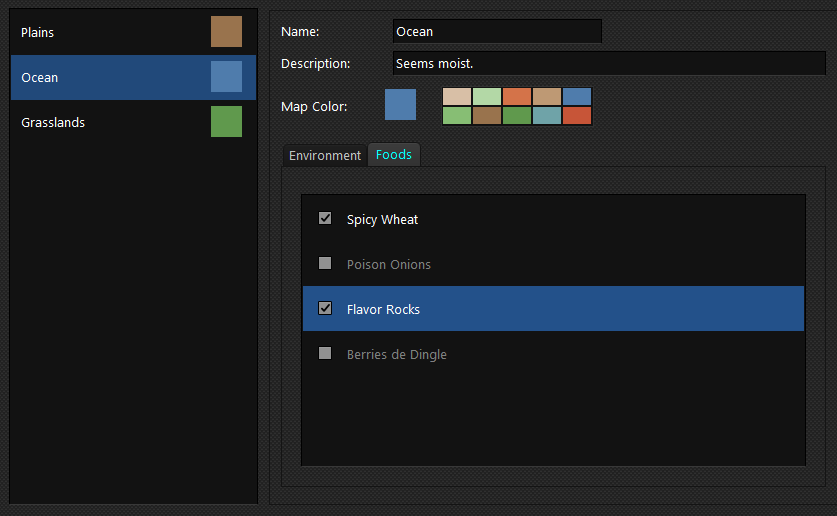

The Foods page of a biome indicates which foods are allowed to grow within it.

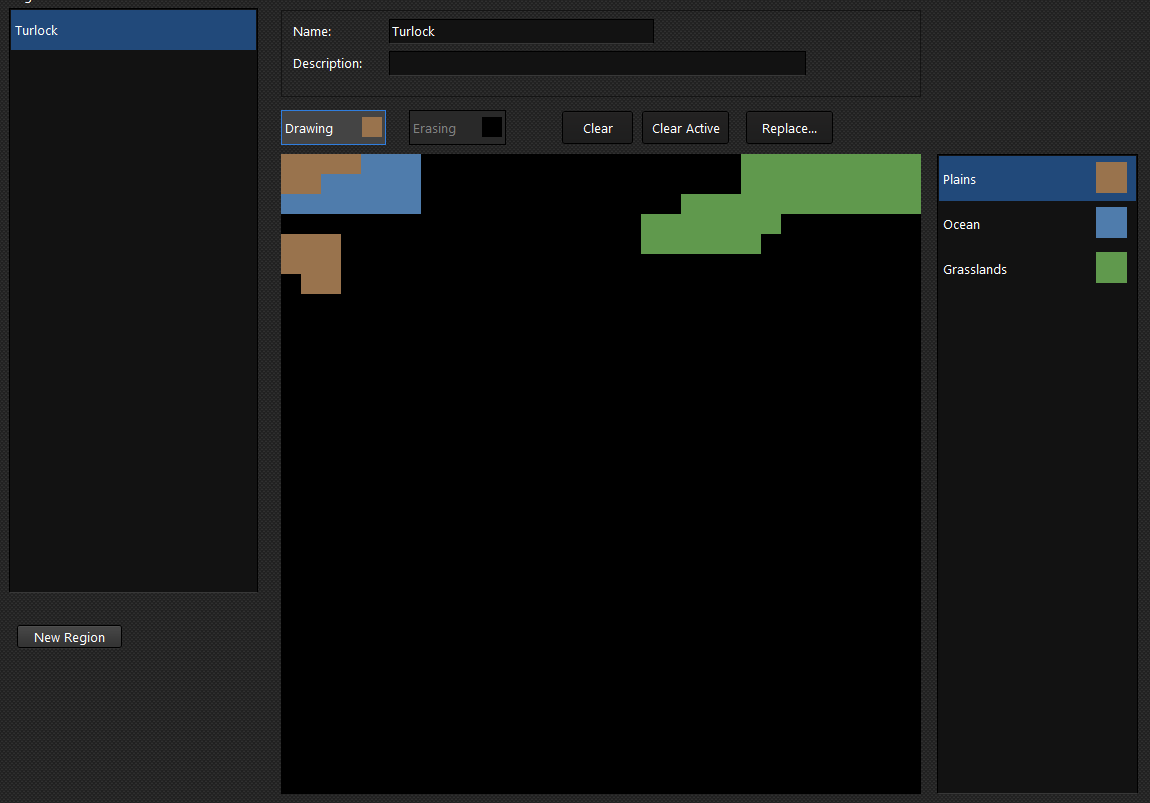

Regions - A region is a 32x32 “pixel” grid in which each cell can be painted with a particular biome.

Here’s a fully ratchet screenshot of the current ideas … you can draw with the mouse and select a color/biome to draw with. An “empty” cell (black) is treated as a default cell, without resources but with “Normal” for the other parameters. Adjustable someday…
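Here’s a minimal sketch of the region grid and the empty-cell rule described above. The `Biome` and `Region` classes, the `levels` dictionary, and the `"moisture"` parameter in the usage note are all hypothetical; the real biome parameters aren’t pinned down here.

```python
from dataclasses import dataclass, field

REGION_SIZE = 32  # a region is a 32x32 grid of editor "pixels"


@dataclass
class Biome:
    name: str
    foods: tuple = ()                           # names of foods allowed to grow here
    levels: dict = field(default_factory=dict)  # hypothetical: parameter name -> level

    def level(self, parameter):
        # Any parameter that was not set explicitly falls back to "Normal".
        return self.levels.get(parameter, "Normal")


# An unpainted (black) cell: no resources, "Normal" for everything else.
DEFAULT_BIOME = Biome(name="default")


class Region:
    def __init__(self):
        # None marks an empty cell that has not been painted yet.
        self.cells = [[None] * REGION_SIZE for _ in range(REGION_SIZE)]

    @staticmethod
    def _check(x, y):
        if not (0 <= x < REGION_SIZE and 0 <= y < REGION_SIZE):
            raise IndexError(f"cell ({x}, {y}) is outside the region")

    def paint(self, x, y, biome):
        """Paint one cell with a biome, like a mouse click in the editor."""
        self._check(x, y)
        self.cells[y][x] = biome

    def biome_at(self, x, y):
        """Return the painted biome, or the default biome for an empty cell."""
        self._check(x, y)
        cell = self.cells[y][x]
        return DEFAULT_BIOME if cell is None else cell
```

For example, after `region.paint(3, 5, Biome("swamp", foods=("berry",), levels={"moisture": "High"}))`, cell (3, 5) reports the swamp biome while every untouched cell still reports no foods and “Normal” for any parameter you ask about.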

Worlds - No editors for this yet, but this will be a 3x3 grid in which you can assign from 1 to 9 regions to cells.
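Since there’s no editor yet, this is only a guess at the shape of the world data. The `World` class and its methods are hypothetical, and I’m assuming each cell holds at most one region and that unassigned cells are allowed.

```python
WORLD_SIZE = 3  # a world is a 3x3 grid of region slots


class World:
    def __init__(self):
        # (col, row) -> region; cells that are missing here are unassigned.
        self.slots = {}

    def assign(self, col, row, region):
        """Place a region in one cell of the 3x3 grid."""
        if not (0 <= col < WORLD_SIZE and 0 <= row < WORLD_SIZE):
            raise IndexError(f"cell ({col}, {row}) is outside the world")
        self.slots[(col, row)] = region

    def is_valid(self):
        """A world needs at least 1 assigned cell and can have at most 9."""
        return 1 <= len(self.slots) <= WORLD_SIZE * WORLD_SIZE
```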

Upscaling Layer

The scale presented by the authoring tools is purposefully low-resolution, but that data will pass through the upscaling layer. This layer is the bridge between the friendly editing concepts like “good” and “better” and the higher resolution “floats everywhere” data for the sim.

Some current thoughts about this layer, which does not exist yet:

- We’ll use a scale factor of 8x, which results in a region of 256x256 cells (one editor “pixel” maps to an 8x8 block of 64 cells in the sim)

- The upscaler will use noise/randomization/etc to introduce variation and unpredictability.

- We’ll look into biome-border smoothing for a more natural result
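The first two ideas can be sketched like this. It’s a guess at the approach, not the real upscaler (which doesn’t exist yet): the `upscale` function, the `jitter` and `seed` parameters, and especially the numbers in `LEVEL_VALUES` are placeholders I made up for illustration. It uses plain nearest-neighbor scaling plus uniform random jitter, and it doesn’t attempt border smoothing.

```python
import random

SCALE = 8  # one editor "pixel" becomes an 8x8 block of sim cells

# Placeholder mapping from friendly editor levels to floats (values are made up).
LEVEL_VALUES = {"good": 0.5, "better": 0.75}


def upscale(grid, scale=SCALE, jitter=0.1, seed=0):
    """Turn a square grid of level names into a (size * scale)-wide grid of floats.

    Each output cell copies the value of the editor pixel it falls inside
    (nearest-neighbor), then gets a small random offset so neighboring cells
    are not identical. Results are clamped to the range [0.0, 1.0].
    """
    rng = random.Random(seed)  # seeded so the same input gives the same world
    size = len(grid)
    out = []
    for y in range(size * scale):
        row = []
        for x in range(size * scale):
            base = LEVEL_VALUES[grid[y // scale][x // scale]]
            value = base + rng.uniform(-jitter, jitter)
            row.append(min(1.0, max(0.0, value)))
        out.append(row)
    return out
```

Feeding it a 32x32 grid gives back 256x256 floats. Seeding the generator is a deliberate choice here: it keeps the variation unpredictable to the author but reproducible from run to run.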

Simulation Layer

Throughout human history, little has been known about this layer, and today we know even less.

We know some stuff …

I think I know that I need to go here next … and design this data … so that the upscaler has both an input and an output target.

We’ll see!
